MLflow: AI Engineering Platform for LLMs, Agents, & Models

MLflow is the largest open-source AI engineering platform for agents, LLMs, and ML models. It enables teams of all sizes to debug, evaluate, monitor, and optimize production-quality AI applications while controlling costs and managing access to models and data. With over 30 million monthly downloads, MLflow is relied on by thousands of organizations each day to ship AI to production with confidence.

MLflow's comprehensive feature set for agents and LLM applications includes production-grade observability, evaluation, prompt management, an AI Gateway for managing costs and model access, and more. Learn more at MLflow for LLMs and Agents.

For machine learning (ML) model development, MLflow provides experiment tracking, model evaluation capabilities, a production model registry, and model deployment tools.

Getting Started with MLflow for ML Models

This page covers MLflow's tools for traditional machine learning and deep learning: ML experiment tracking, model versioning, model deployment, and model evaluation. If you're building agents and LLM applications, see MLflow for LLMs and Agents.

If this is your first time exploring MLflow for MLOps, the tutorials and guides here are a great place to start.

Quickstart

A quick guide to learn the basics of MLflow for MLOps by training a simple scikit-learn model

MLflow for Agents & LLMs

A walkthrough of MLflow's Agent and LLM capabilities, including tracing, evaluation, and prompt management

Deep Learning Guide

A hands-on tutorial on how to use MLflow for ML to track deep learning model training with PyTorch

MLflow for ML Models: Core Capabilities

MLflow for ML Models provides comprehensive support for traditional machine learning and deep learning workflows. From experiment tracking and model versioning to deployment and monitoring, MLflow streamlines every aspect of the ML lifecycle. Whether you're working with scikit-learn models, training deep neural networks, or managing complex ML pipelines, MLflow provides the tools you need to build reliable, scalable machine learning systems.

Explore MLflow's machine learning capabilities and integrations below to enhance your ML development workflow!

- Tracking & Experiments

- Model Registry

- Model Deployment

- ML Library Integrations

- Model Evaluation

Track experiments and manage your ML development

Core Features

MLflow Tracking provides comprehensive experiment logging, parameter tracking, metrics visualization, and artifact management.

Key Benefits:

- Experiment Organization: Track and compare multiple model experiments

- Metric Visualization: Built-in plots and charts for model performance

- Artifact Storage: Store models, plots, and other files with each run

- Collaboration: Share experiments and results across teams

Guides


Manage model versions and lifecycle

Core Features

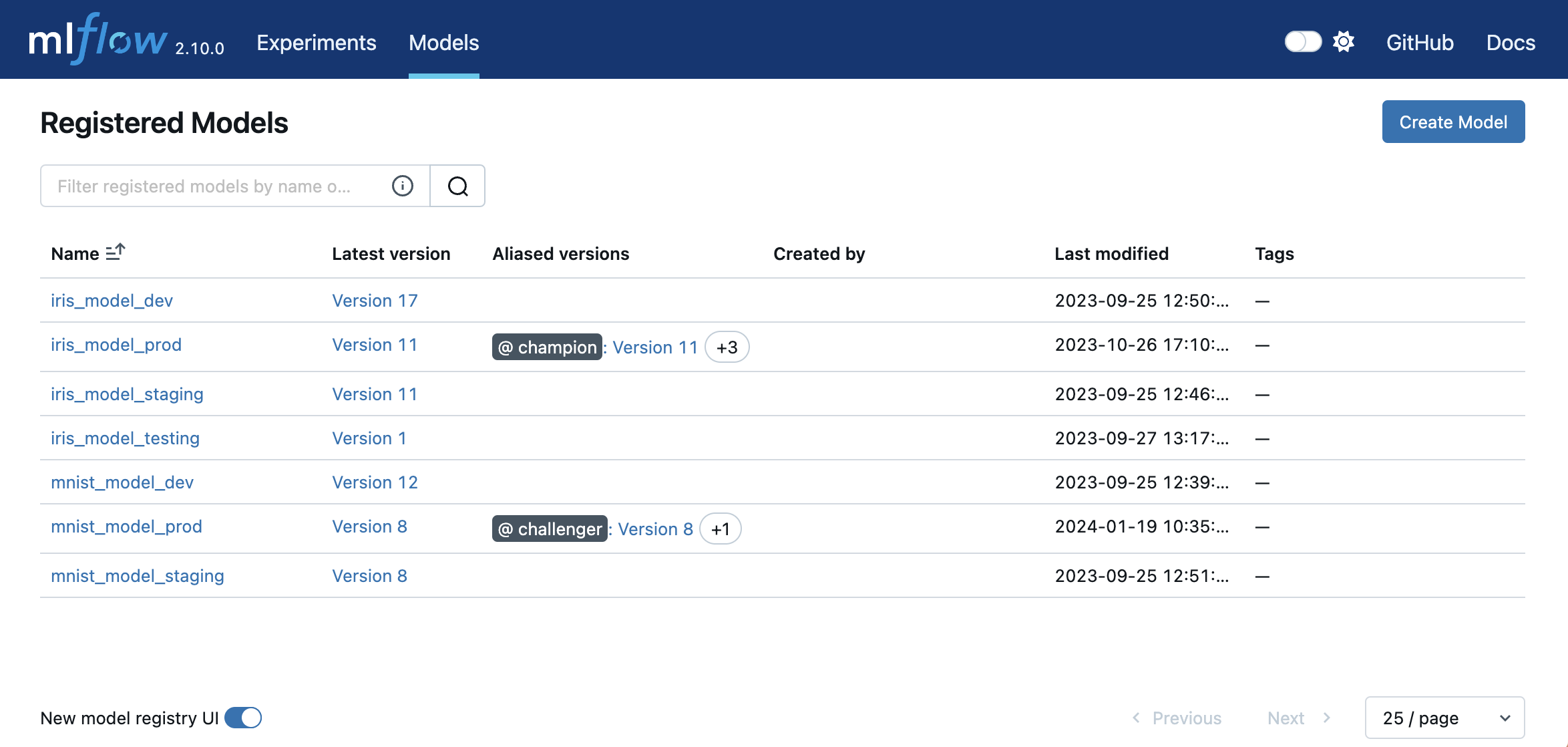

MLflow Model Registry provides centralized model versioning, stage management, and model lineage tracking.

Key Benefits:

- Version Control: Track model versions with automatic lineage

- Stage Management: Promote models through staging, production, and archived stages

- Collaboration: Team-based model review and approval workflows

- Model Discovery: Search and discover models across your organization

Guides

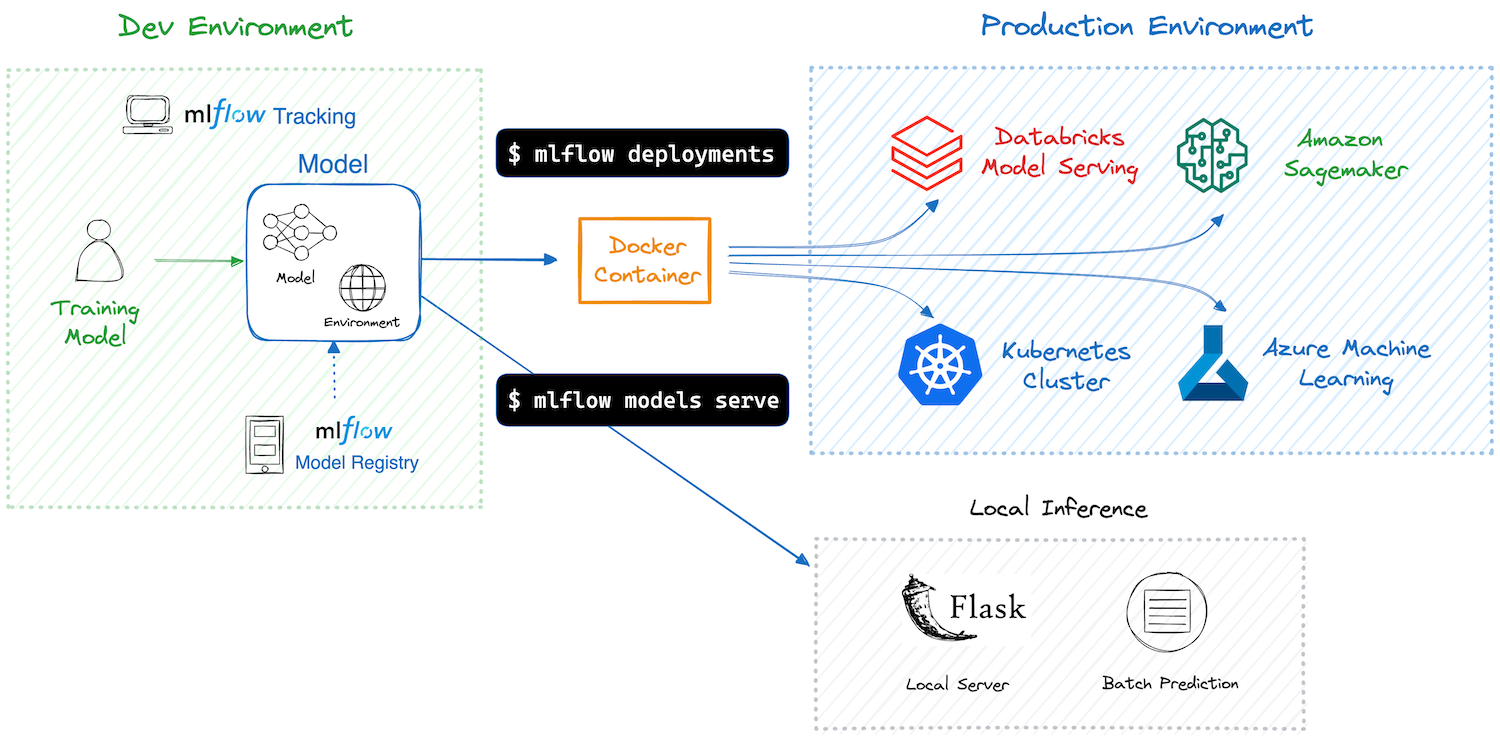

Deploy models to production environments

Core Features

MLflow Deployment supports multiple deployment targets including REST APIs, cloud platforms, and edge devices.

Key Benefits:

- Multiple Targets: Deploy to local servers, cloud platforms, or containerized environments

- Model Serving: Built-in REST API serving with automatic input validation

- Batch Inference: Support for batch scoring and offline predictions

- Production Ready: Scalable deployment options for enterprise use
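As a rough illustration of the built-in REST serving mentioned above, the sketch below builds a request body in the `dataframe_split` layout that MLflow's scoring server accepts (MLflow 2.0 and later) and defines a small helper to POST it to the `/invocations` endpoint. The helper names `build_payload` and `score`, the column names, the host, and the port are assumptions for the example; it presumes a model is already being served locally, for instance with `mlflow models serve`.

```python
import json
import urllib.request


def build_payload(columns, rows):
    """Serialize tabular input in the `dataframe_split` layout."""
    body = {
        "dataframe_split": {
            "columns": list(columns),
            "data": [list(row) for row in rows],
        }
    }
    return json.dumps(body)


def score(url, payload, timeout=10.0):
    """POST the payload to a running scoring server and return the parsed JSON reply."""
    request = urllib.request.Request(
        url,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())


# Hypothetical feature columns and rows, for illustration only.
payload = build_payload(
    ["sepal_length", "sepal_width"],
    [[5.1, 3.5], [6.2, 2.9]],
)
print(payload)

# Requires a model server listening locally, so it is left commented out:
# result = score("http://127.0.0.1:5000/invocations", payload)
```

Keeping payload construction separate from the network call makes the request format easy to check without a live server.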

Guides

Explore Native MLflow ML Library Integrations

Evaluate and validate your ML models

Core Features

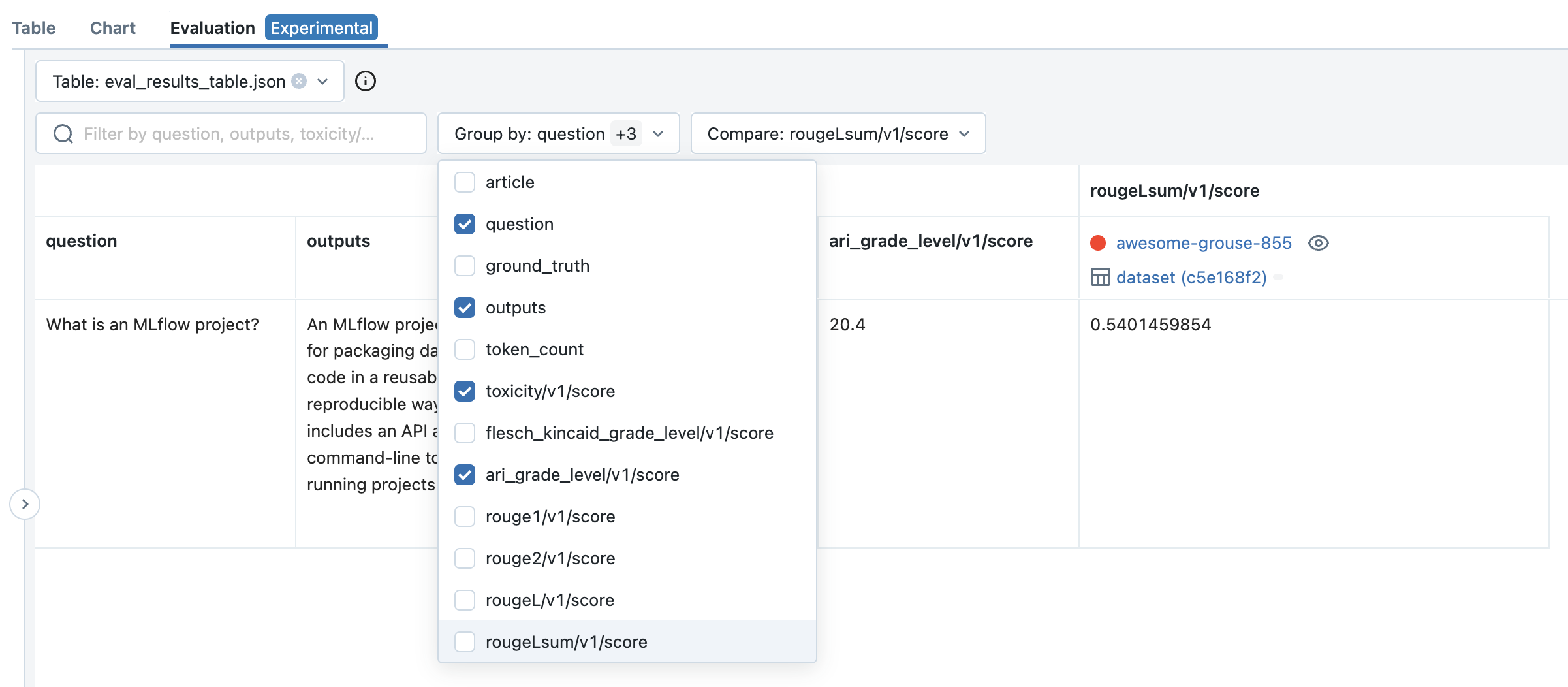

MLflow Evaluation provides comprehensive model validation tools, automated metrics calculation, and model comparison capabilities.

Key Benefits:

- Automated Metrics: Built-in evaluation metrics for classification, regression, and more

- Custom Evaluators: Create custom evaluation functions for domain-specific metrics

- Model Comparison: Compare multiple models and versions side-by-side

- Validation Datasets: Track evaluation datasets and ensure reproducible results

Guides

- Learn how to evaluate your ML models with MLflow

- Discover custom evaluation metrics and functions

- Compare models with MLflow Model Validation

Running MLflow for ML Models Anywhere

MLflow runs in a variety of environments: your local machine, on-premises clusters, cloud platforms, and managed services. As an open-source platform, MLflow is vendor-neutral. Whether you're building AI agents, LLM applications, or ML models, you have access to its core capabilities, including tracing, evaluation, experiment tracking, and deployment.